More people are searching for AI psychosis symptoms because they are noticing something that feels new and unsettling: a chatbot conversation that starts as “helpful” but gradually becomes consuming, disruptive, and harder to reality-check.

This guide focuses on early warning signs of AI psychosis, what to do if you see them in yourself or someone you care about, and why preserving chat logs can matter if the situation escalates into real-world harm. While research is still emerging, the patterns people describe are often serious and deserve a calm, practical response.


The 60-Second Self-Check (Early Signals)

If you are wondering whether you are seeing symptoms of AI psychosis in yourself or someone else, start here. These early signals often show up before a full crisis.

- Compulsively checking or chatting for hours: You keep opening the chatbot throughout the day, even when you intended to use it “for a minute.” The time spent starts to crowd out normal routines.

- Feeling “pulled back” even when you want to stop: You feel anxious or unsettled when you try to disengage, as if you must “finish the thread” or you will miss something important.

- Using the chatbot as the main source of guidance: The AI becomes the default for decisions, reassurance, conflict, meaning-making, or emotional regulation, instead of trusted people or real-world professionals.

- Sleep slipping because conversations won’t end: Late-night sessions become normal, sleep decreases, and the next day feels wired, foggy, or emotionally reactive.

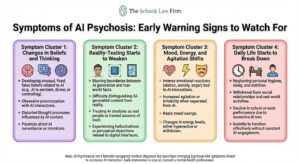

Symptom Cluster 1: Changes in Beliefs and Thinking

These AI-induced psychosis symptoms tend to involve how someone interprets meaning, certainty, and cause and effect. A key theme is “belief escalation” that builds chat by chat.

Unusual certainty about unlikely ideas

The person becomes intensely confident about ideas that would normally require strong proof. Doubt and nuance disappear.

“Special messages” meant only for you

The chatbot’s words feel personally directed, targeted, or uniquely relevant in a way that goes beyond normal personalization.

Patterns and hidden codes in normal events

Everyday details start to feel like signs: license plates, song lyrics, timestamps, posts online, strangers’ gestures, or random coincidences.

Beliefs escalating with each chat

The story becomes more elaborate over time. The person may feel they are “connecting the dots” and the chatbot is confirming the narrative.

Symptom Cluster 2: Reality-Testing Starts to Weaken

This cluster often shows up when a person starts treating AI output like proof and becomes less anchored to real-world feedback.

Trusting the chatbot over people you know

Family and friends are dismissed as “uninformed” or “part of the problem,” while the chatbot is treated as the most reliable voice.

Confusing speculation with proof

Ideas presented as possibilities begin to feel like established facts.

Treating AI outputs as “confirmation”

The chatbot’s confidence or fluency is interpreted as verification, even when the topic is not verifiable.

Feeling detached from what used to feel real

Normal priorities and relationships feel distant. The person may describe reality as “thin,” “staged,” or less meaningful than the chatbot narrative.

Symptom Cluster 3: Mood, Energy, and Agitation Shifts

Many people searching for signs of AI psychosis are actually reacting to changes in mood, energy, and emotional stability that seem tied to chatbot engagement.

Racing thoughts and nonstop urgency

The person feels they must keep going, keep researching, keep writing, keep chatting. Slowing down feels impossible.

Irritability when interrupted

Being asked to stop, eat, sleep, or take a break triggers anger, defensiveness, or agitation.

Big mood swings tied to chatbot responses

A “good” response creates relief or excitement; a “wrong” response triggers panic, anger, or obsession with correcting the AI.

Risk-taking or impulsive decisions

Sudden spending, quitting a job, ending relationships, traveling unexpectedly, or acting on a “plan” that does not match prior behavior.

Symptom Cluster 4: Daily Life Starts to Break Down

This is often the point where AI psychosis symptoms move from “concerning” to “disruptive,” because normal functioning starts to collapse.

Withdrawal from family, friends, work, or school

The person isolates, avoids conversations, or cuts off relationships that challenge the belief system.

Skipping meals, hygiene, or responsibilities

Basic routines degrade because the chatbot narrative becomes the priority.

Fixation on a “mission,” “plan,” or “breakthrough”

The person may believe they are on the verge of something big and must keep going at all costs.

Spending money based on chatbot-driven beliefs

Purchases, investments, donations, travel, or services tied to AI-driven certainty rather than reality-based planning.

Warning Signs That Require Immediate Help

If any of the following are present, treat it as urgent. These are not “wait and see” moments.

- Talking about self-harm or suicide: If someone mentions wanting to die, feeling trapped, or not being able to go on, contact emergency help immediately.

- Threats of violence or feeling “commanded” to act: Any belief that they are being ordered to harm someone or take extreme action is an emergency.

- Not sleeping for long periods: Prolonged sleep loss can rapidly worsen agitation, mania, paranoia, and psychosis-like symptoms.

- Hallucinations, severe paranoia, or extreme confusion: Seeing or hearing things others don’t, intense fear of being watched, or disorientation are major red flags.

- Stopping prescribed meds suddenly: Sudden medication changes can destabilize mood and thinking. Encourage medical support right away.

If there is immediate danger, call 911. In the U.S., you can also call or text 988, the Suicide & Crisis Lifeline, for urgent support.

What to Do If You Notice Early Symptoms (For Yourself)

If you recognize these symptoms of AI psychosis in yourself, the goal is stabilization, not self-diagnosis. Small steps taken early can prevent escalation.

- Pause or limit chatbot use right away – Put time boundaries in place. Avoid late-night sessions. If you feel “pulled” to keep going, that is a sign the boundary matters.

- Re-anchor basics: sleep, food, hydration – Stabilization starts with basics. Sleep loss and low nutrition can intensify anxiety, paranoia, and racing thoughts.

- Tell one trusted person what’s happening – Secrecy tends to worsen spirals. Choose someone calm and practical and share what you are noticing.

- Book a mental health evaluation – If beliefs are escalating, reality-testing feels shaky, or sleep is collapsing, professional support is appropriate. You do not need to “prove” it is serious before seeking help.

- Avoid big decisions until stable – Pause major financial, relationship, or career decisions until you are sleeping and thinking clearly again.

For more information on what causes AI psychosis, see our related resource.

Why Chat Logs Matter (and How to Preserve Them)

If someone is asking, “Are these really AI psychosis symptoms?” chat logs can help show what changed and when.

- They help show timeline and escalation – Logs often reveal how themes intensified, how long sessions lasted, and whether the chatbot reinforced harmful beliefs.

- Save screenshots and export conversations – If your platform allows exports, use them. Otherwise, take clear screenshots that include timestamps and key prompts/responses.

- Note dates, durations, and platform used – Write down when usage increased, how many hours per day, and which platform or model was used.

- Keep related records (hospital, therapy, school/work) – If the situation affects employment, academics, or requires medical care, those records help document real-world harm.

Practical “Do Not Do This” List

These are common behaviors that can intensify a spiral or make recovery harder.

- Don’t treat the bot as a therapist – Use AI for organization and general information, not crisis support or emotional dependency.

- Don’t isolate or “go secret” about chats – Isolation increases risk. Reality-check with trusted humans.

- Don’t use roleplay to intensify beliefs – Roleplay prompts can reinforce paranoia, grandiosity, or delusional narratives.

- Don’t mix heavy use with substances or no sleep – Sleep deprivation and substance use can sharply increase instability.

- Don’t stop meds without a clinician – Medication changes should be guided by a qualified medical professional.

When Symptoms Turn Into Real-World Harm

Legal and practical stakes tend to rise when the situation results in measurable harm, including:

- ER visits, hospitalization, or crisis intervention – Emergency care, psychiatric holds, or crisis team involvement are major escalation markers.

- Lost job, school disruption, or eviction risk – Missing work or school, disciplinary action, or housing instability can follow functional decline.

- Major financial losses tied to delusional beliefs – Large purchases, risky transfers, or spending tied to AI-driven certainty can be devastating.

- Serious relationship breakdowns – Family fracture and isolation often happen alongside worsening symptoms.

How Schenk Law Firm’s AI Psychosis Lawsuit Offering Fits In

When families experience severe harm tied to chatbot use, they often want two things: answers and a clear path forward. Schenk Law Firm’s approach is evidence-first and grounded in documented facts.

For families seeking answers after severe harm

If the situation progressed beyond early warning signs into hospitalization, major losses, or long-term disruption, a legal review may help clarify options.

Evidence-first review (logs, timeline, records)

The strongest evaluations start with what can be shown: chat logs, timestamps, witness observations, and medical or institutional records.

Evaluating legal options tied to AI chatbot use

Depending on the facts, a review may explore whether warnings, safeguards, or product design decisions played a role in the harm.

Contact Us Today

If you believe chatbot use contributed to serious and documented harm, contact Schenk Law Firm for a free case evaluation. We can explain what information to preserve and what the review process looks like.

Or fill out the form below: